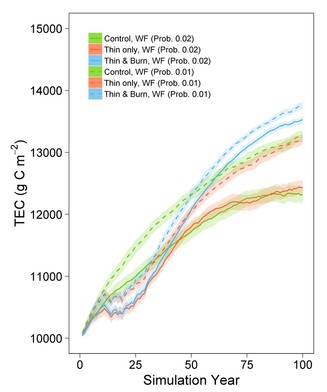

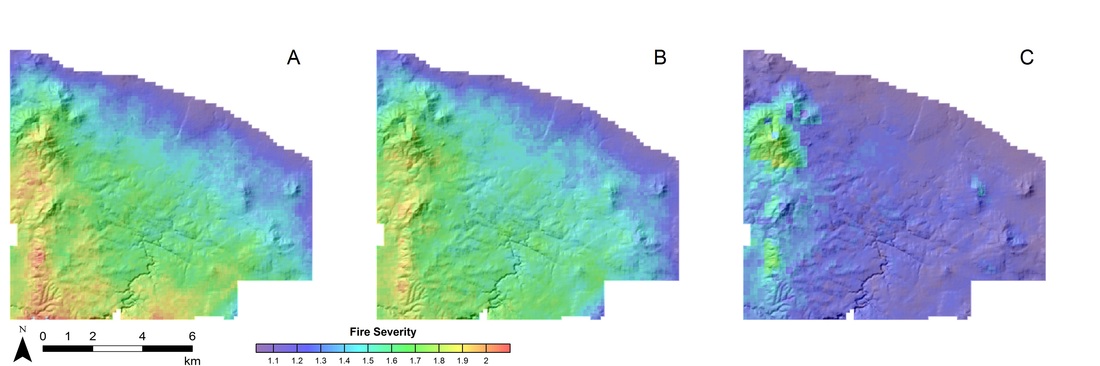

Hurteau et al. (2008)

There is an ongoing debate in both the policy and scientific communities about the impacts that forest treatments to reduce high-severity wildfire risk have on carbon storage in forests. Treatments typically involve cutting down trees to break up the fuel that allows fire to move from the forest floor to the canopy, and prescribed burning to reduce surface fuels. Both of these management tools remove carbon from the forest and emit it back to the atmosphere, a fact that is well established. It is also well established that these treatments are effective at reducing high-severity wildfire risk by changing fire behavior. As a result, when fire burns through a treated forest, we tend to see surface fires that kill fewer trees and emit less carbon back to the atmosphere. The debate about whether treatments result in more or less forest carbon exists because we cannot predict when and where wildfires will burn. This means that we typically have to treat more forest than will burn in wildfire, and treated areas that don’t burn store less carbon than untreated forests (see Campbell et al. 2012). Much like in real estate, it’s all about location, location, location.

In our most recent paper we asked the question: how does the unpredictable nature of fire occurrence alter carbon dynamics between treated and untreated forests? With funding from the Department of Defense’s Strategic Environmental Research and Development Program, we conducted a study in ponderosa pine forest at Camp Navajo in Arizona. We used field data and the LANDIS-II model to run a series of simulations. We simulated three different conditions: 1) no treatment (control), 2) thinning small trees (thin only), and 3) thinning small trees and prescribed burning every 10 years (thin and burn).
We also simulated two different chances of wildfire occurring, 1 in 50 and 1 in 100, which means that in any given year there is either a 2% (1 in 50) or a 1% (1 in 100) chance that a wildfire will occur. As we expected, and as has been demonstrated before, the amount of carbon in the treated forest is lower than in the control in the absence of wildfire. However, when we did simulate wildfire, the thin and burn treatment stored more carbon than the control after several decades. In the 1 in 50 chance wildfire simulation (solid lines), the thin and burn treatment stored more carbon than the control beginning in year 40; in the 1 in 100 chance simulation (dashed lines), it did so beginning in year 51. The treatment that only thinned small trees ended up with about the same amount of carbon as the control in both cases.

These results stem from the way treatments alter fire behavior. When a fire burns through the forest, we measure its effect using fire severity. Higher severity means that more trees are killed by the fire. When we calculated the mean fire severity for the different simulations, we found that when the forest was thinned and burned, mean severity was consistently lower. We also calculated the coefficient of variation (CV) for fire severity. CV is a measure of how variable the results are; when the CV is high, fires at any given location ranged from low to high severity. Thinning alone did little to change how variable the results were and had CV values similar to the control. That is because thinning alone doesn’t deal with the surface fuels the way prescribed burning does. When we thinned and burned, CV was much lower. Combined with the lower mean, this means that any given fire tended to be less severe in the thin and burn scenario.
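For readers who want to see how a coefficient of variation is computed, here is a minimal sketch. The severity values are made up for illustration (on a hypothetical 1 to 5 scale) and are not taken from our simulations; the function name is also just an illustrative choice.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values):
    """Return the standard deviation divided by the mean (CV)."""
    average = mean(values)
    if average == 0:
        raise ValueError("CV is undefined when the mean is zero")
    # pstdev treats the values as the full population of simulated fires
    return pstdev(values) / average


# Hypothetical severity scores for the same location across simulated fires.
# These numbers are invented for illustration only.
control = [1, 5, 2, 5, 1, 4]
thin_and_burn = [1, 2, 1, 2, 1, 2]

print("control CV:", round(coefficient_of_variation(control), 2))
print("thin and burn CV:", round(coefficient_of_variation(thin_and_burn), 2))
```

In this made-up example the thin and burn values have both a lower mean and a lower CV, which is the pattern described above: fires are less severe on average and less variable from one fire to the next.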

It might be argued that, because we need to store more carbon now to limit the impacts of climate change and our study showed that the treated forest stores less carbon for the better part of five decades, we shouldn’t do anything. However, our wildfire frequencies were on the low end of the range of frequencies for this area. Changing climate is projected to increase the frequency of large wildfires, and this presents a bigger risk for storing carbon in forests. In short, treating forests has upfront carbon costs, but stabilizes the remaining carbon over the long term.
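To give a feel for how a small annual chance of wildfire adds up over decades, here is a rough sketch. It assumes that each year is independent and that the annual chance applies to a single location, which is a simplification and not how the landscape model itself works; the function name is hypothetical.

```python
def prob_at_least_one_fire(annual_probability, years):
    """Chance of one or more fires in `years`, assuming independent years."""
    if not 0.0 <= annual_probability <= 1.0:
        raise ValueError("annual_probability must be between 0 and 1")
    if years < 0:
        raise ValueError("years must be non-negative")
    # Probability of no fire in any year, subtracted from 1
    return 1.0 - (1.0 - annual_probability) ** years


# Compare the two simulated fire chances over a 50-year window
for label, p in [("1 in 50", 0.02), ("1 in 100", 0.01)]:
    chance = prob_at_least_one_fire(p, 50)
    print(f"{label}: {chance:.0%} chance of at least one fire in 50 years")
```

Under these simplifying assumptions, even a 1% or 2% annual chance becomes a substantial chance over the multi-decade time frame relevant to forest carbon, and a higher annual chance raises it further.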


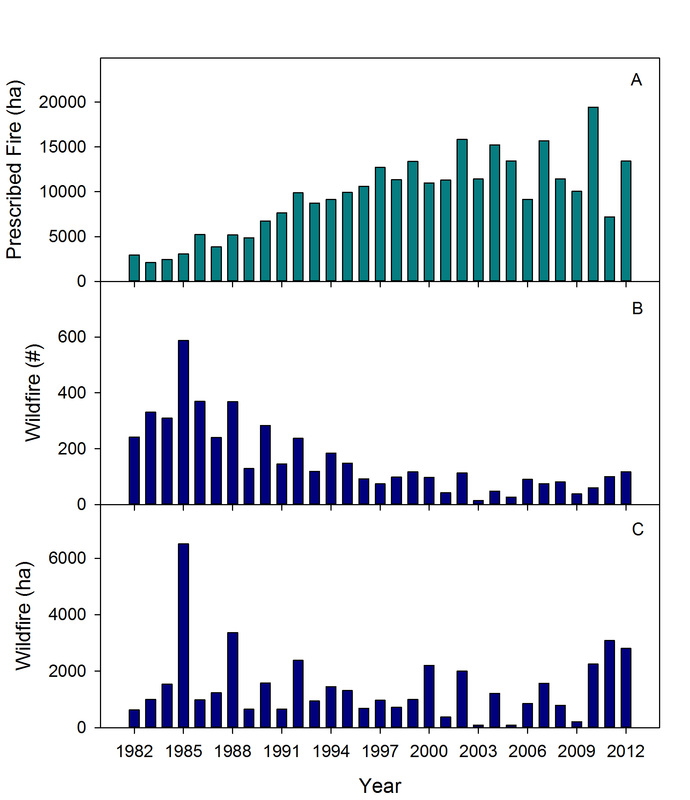

Fire is a disturbance that affects many ecosystems on the planet; the frequency with which different ecosystems burn is a function of climate and the availability of plant material to burn. Fires typically occur when temperatures are higher and relative humidity is lower. These two variables cause the moisture in plant material to decrease, making it more flammable. Without a decrease in fuel moisture, more energy is required than is available to evaporate all of the water before the fuel will burn. This is precisely why you can’t cut a live tree into firewood and stick it right into the stove to heat your house. You have to let it “season” so the moisture content drops.

Many forest types historically experienced frequent fire. These forests are characterized in part by the fact that at some point during the year, conditions are right for an ignition to turn into a fire. These forests are typically fuel limited, meaning that if a fire occurred recently there is unlikely to be sufficient fuel left to carry the fire. The longleaf pine forests of the southeastern US are a frequent-fire forest, but they typically aren’t fuel limited because of the long growing season and rainy winters. This allows vegetation to recover quickly after a fire. Historically these forests experienced fire every 2-4 years. When we consistently prevent fires from burning, plant material, or fuel, builds up, and unplanned ignition events (think lightning, a burning cigarette, or, on a military installation, artillery) can cause wildfires.

In our most recent paper, led by Rob Addington of the Colorado State Chapter of The Nature Conservancy, we investigated the relationship between prescribed burning and wildfire activity at Fort Benning in Georgia. Natural resource managers at Fort Benning have been restoring longleaf pine forests and fire as a process to maintain them. You can read more about it in a previous post.
We used a 30-year record of wildfire and prescribed fire occurrence to answer the question: does increasing prescribed fire use reduce wildfire frequency and area burned by wildfire? We also wanted to understand how drought might influence this relationship. We found that as the number of hectares (1 hectare = 2.47 acres) burned with prescribed fire increased over the 30-year period, the number of wildfires decreased by about half. When we included drought and area burned by prescribed fire in the previous year, we explained 80% of the year-to-year variation in the number of wildfires. When we did the same with area burned by wildfire, we explained 54% of the year-to-year variation.

Figure: The top panel (A) is the number of hectares burned by prescribed fire each year. The middle panel (B) is the total number of wildfires each year. The bottom panel (C) is the number of hectares burned by wildfire each year.

As we previously found, regular burning is important for restoring and maintaining longleaf pine forest. This research shows that by restoring fire using prescribed burning, managers can also reduce the number of wildfires and the area they burn.

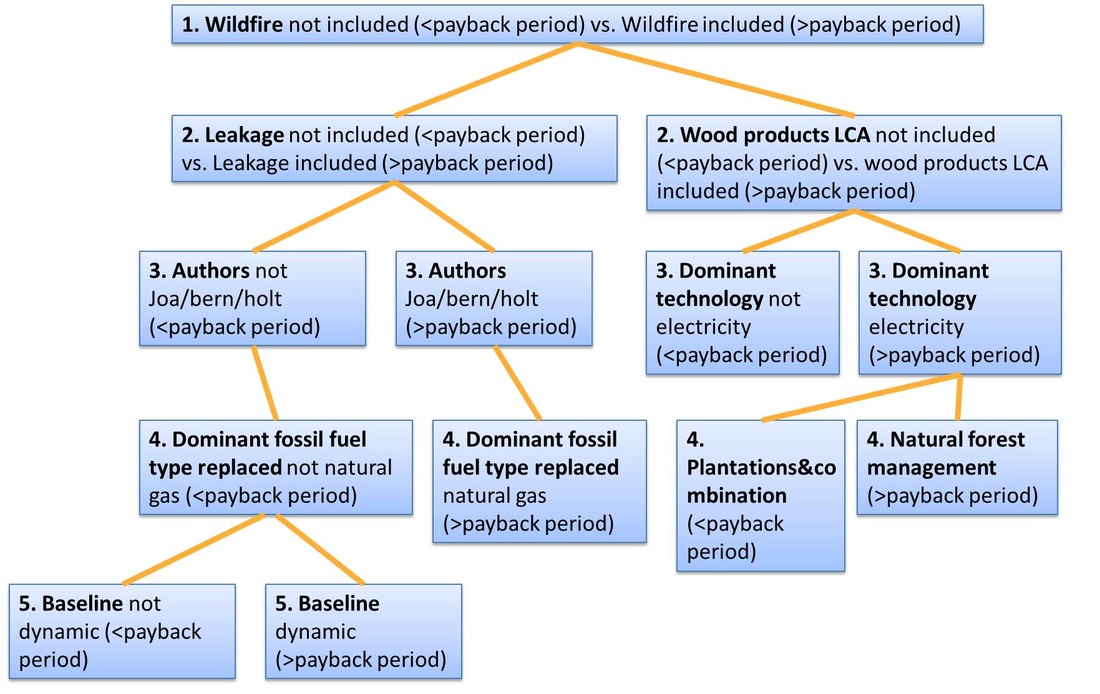

The Intergovernmental Panel on Climate Change in their 5th Assessment Report stated: “Human influence on the climate system is clear…” and “Warming of the climate system is unequivocal…”. In response to these facts, there has been considerable research into alternative energy sources as a way to reduce our emissions of greenhouse gases from fossil fuels. We already use plant material (biomass) as a source to generate everything from liquid fuels (e.g. ethanol from corn or sugar cane) to feedstocks that we can burn for heat or electricity. Humans have been burning wood for millennia to produce heat for cooking and warmth.

Because of the rising level of greenhouse gases in the atmosphere from burning fossil fuels, there has been considerable research on using forests to provide a biomass feedstock for producing energy on a large scale. Burning biomass to produce energy still emits greenhouse gases to the atmosphere, but the thinking goes that since plants sequester carbon from the atmosphere, burning them is carbon neutral because plants growing somewhere else on a given day will make up for the carbon emitted on the same day. This is certainly a reasonable hypothesis, but when it comes to forest biomass energy, there has been a lot of scientific debate because trees take a long time to grow (see work by Gunn and colleagues and Galik and colleagues). Some argue that harvesting a forest for biomass energy creates a carbon debt because if the harvested forest is, say, 100 years old, it will take 100 years for a regenerating forest to recover the carbon emitted when we produce energy from burning the trees we harvested.

Quantifying the carbon balance of using forests for a biomass feedstock is not a simple task. There are many factors that have to be considered. As an example, if the forest is likely to be harvested for wood products, the demand for wood products doesn’t go away if the trees are instead harvested for biomass energy.
This could cause forests elsewhere to be harvested for wood products, a concept known as leakage. However, emerging biomass markets could also increase forest carbon storage if they encourage additional forest planting or avoiding land use change from forest to some other land use.

Another issue to consider is that forests and the carbon they contain are not static in time. Trees grow and die. Year-to-year variability in temperature and precipitation can affect both growth and mortality rates. Disturbances such as wildfire and insect outbreaks can kill trees. These and many other factors affect the amount of carbon stored in and removed from the atmosphere by forests and are all part of the idea called baseline. The baseline is the amount of carbon the forest would store in the absence of the biomass project, and how much carbon would be emitted to generate energy using fossil fuels (without biomass). There has been considerable debate about the type of baseline to use. Some have argued for the use of a static baseline and others for a dynamic baseline. A static baseline uses the amount of carbon stored in the forest before the project and assumes it remains unchanged over time, whereas a dynamic baseline assumes that the carbon stock will vary as the forest changes.

In a recent paper led by Thomas Buchholz of the Spatial Informatics Group, we analyzed the results of 38 previously published studies on this topic that included a measure of carbon payback period (how long until the carbon debt is gone and the forest biomass project is carbon neutral). The carbon payback period for the studies we included in our analysis ranged from 0 to 8000 years. We identified 20 different attributes to classify these studies. They included things like type of forest (plantation or natural), fossil fuel energy source displaced (coal, natural gas, etc.), and whether or not the study included natural disturbance when modeling forests.
Once we classified all of the studies based on these attributes, we ran an analysis to determine which attributes were most influential for determining the payback period. Given all the debate around baseline, our results were surprising. The most influential factor was whether or not the study included the effects of wildfire in the quantification of the carbon payback period for a project. Those projects that did include wildfire had a longer payback period. Other attributes, like leakage and whether the study included a life cycle assessment of wood products, were also influential factors for determining the length of the payback period. The type of baseline (static reference point or dynamic) was only influential after many other attributes were accounted for, and only for a handful of the studies we evaluated.

This figure shows the outcome of our classification and regression tree analysis. The importance of different attributes decreases as you move from the top to the bottom.

Given the importance of disturbances, such as wildfire, in forests, our results make clear that we cannot neglect disturbance when quantifying the carbon payback period of a forest biomass project. Thinning forests to reduce the number of trees and reduce the risk of wildfire is a common management strategy (see previous posts). Cutting trees to reduce wildfire risk removes carbon from the forest, and we note in the paper that the payback period can vary considerably in length depending on the driver of forest thinning. If the production of biomass energy is the reason for thinning and reduced wildfire risk is the by-product, carbon payback periods can be longer. However, when thinning to reduce fire risk generates biomass and this biomass would be generated even in the absence of a biomass market, carbon payback periods can be considerably shorter. This is in large part because when small trees are cut to reduce the risk of wildfires, they are often piled and burned.

Our finding about the influence of the cause of thinning is similar to that noted in a recent WRI report, that “waste” products from plant production, including timber processing, are a potential source of energy that will reduce greenhouse gas emissions. We also concluded from our study that there are a number of attributes that can be standardized in their evaluation to make cross-study comparisons more of an apples-to-apples comparison. While our study did not provide a definitive conclusion about the carbon neutrality of forest biomass energy, it does provide a framework such that future studies can be analyzed in aggregate to determine if a conclusion can be drawn on this topic for all forest biomass projects.
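To make the idea of a carbon payback period concrete, here is a toy sketch. It is not the method used in any of the studies we reviewed; the function name, the units, and the example numbers are all hypothetical. It simply counts the years until the cumulative carbon benefit (for example, regrowth plus avoided fossil fuel emissions, measured against whatever baseline is chosen) offsets the initial carbon debt.

```python
def carbon_payback_year(initial_debt, annual_net_benefit, max_years=10000):
    """Return the first year the cumulative benefit offsets the debt.

    initial_debt: carbon emitted up front (any consistent unit).
    annual_net_benefit: function of the year number returning the net
        carbon benefit for that year relative to the chosen baseline.
    Returns None if the debt is not repaid within max_years.
    """
    if initial_debt <= 0:
        return 0  # no debt to repay
    balance = -initial_debt
    for year in range(1, max_years + 1):
        balance += annual_net_benefit(year)
        if balance >= 0:
            return year
    return None


# Invented example: a debt of 100 units repaid at 2 units per year
print(carbon_payback_year(100.0, lambda year: 2.0))

# Invented example: the same debt, but a disturbance removes the
# benefit in every 25th year, which lengthens the payback period
print(carbon_payback_year(100.0, lambda year: 0.0 if year % 25 == 0 else 2.0))
```

Even in this toy version, the answer depends heavily on what goes into the annual benefit, which is why attributes such as wildfire, leakage, and the choice of baseline matter so much when comparing studies.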
